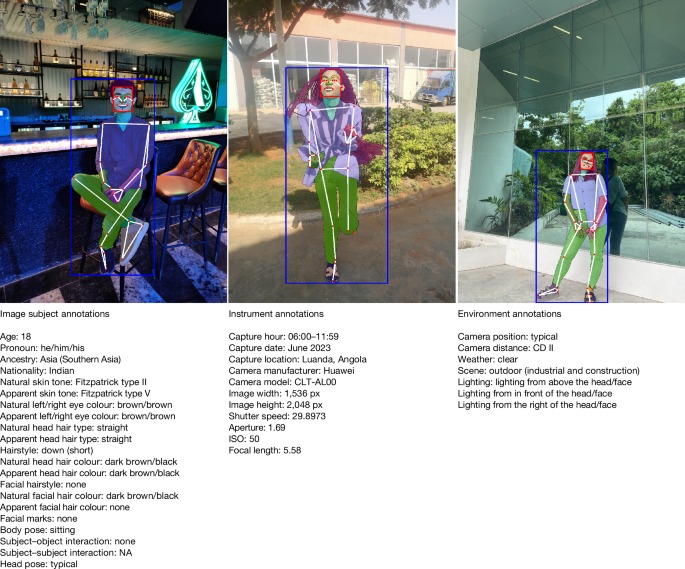

Fair human-centric image dataset for ethical AI benchmarking

Researchers have introduced the Fair Human-Centric Image Benchmark (FHIBE), an image dataset designed around ethical data practices. Published in the journal Nature, the dataset implements best practices for consent, privacy, compensation, safety, diversity, and utility in human-centric computer vision applications. As demand for fair and responsible AI systems grows, FHIBE aims to set a standard for the ethical use of image data. It gives researchers and developers a benchmark for evaluating the fairness of their algorithms, so that models can be checked for equitable as well as effective performance.

FHIBE arrives amid increasing scrutiny of biased data in AI systems, which can perpetuate stereotypes and reinforce social inequalities. Traditional datasets often underrepresent some demographic groups, which can skew outcomes in applications such as facial recognition, medical imaging, and social media content moderation. FHIBE addresses this shortcoming by including images that reflect a broad range of people and backgrounds. This diversity makes the dataset more useful for evaluation and is a step towards more inclusive AI systems that operate fairly across different contexts.

The dataset is also designed to integrate easily into researchers' existing workflows. It provides clear guidelines on ethical considerations, which encourage responsible use and promote transparency in AI development. Its introduction is a reminder of the responsibility that comes with technological advancement and of the need for a more ethical approach to AI research. As computer vision continues to evolve, FHIBE offers a resource for fostering fairness and accountability in AI systems that are meant to serve all members of society.

https://www.youtube.com/watch?v=rhjJpki2wA4

Nature, published online 05 November 2025; doi:10.1038/s41586-025-09716-2

The Fair Human-Centric Image Benchmark (FHIBE, pronounced ‘Feebee’)—an image dataset that implements best practices for consent, privacy, compensation, safety, diversity and utility—can be used responsibly as a fairness evaluation dataset for many human-centric computer vision applications.