Aligning machine and human visual representations across abstraction levels

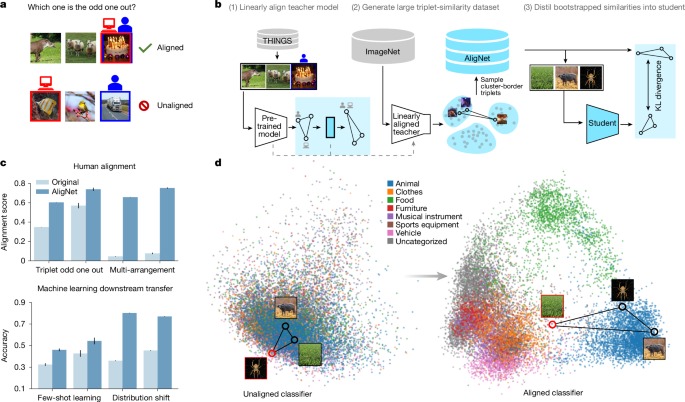

In a study published in *Nature*, researchers examine how well foundation models align with human judgments and report that aligned models better replicate human behavior and uncertainty across levels of visual abstraction. Foundation models are large-scale machine learning models trained on vast datasets, and they perform strongly on tasks such as image recognition and natural language processing. Their alignment with human cognitive processes, however, remains a challenge. The study addresses that gap by showing that models fine-tuned to incorporate human-like judgments approximate human responses more closely and also generalize better.

The researchers ran a series of experiments in which foundation models were trained on datasets that included human annotations and preferences. With human feedback integrated into training, the models handled complex visual abstractions better, such as distinguishing nuanced emotional expressions in images or interpreting scenes with many elements. For example, when categorizing images by emotional content, models aligned with human judgments identified subtle emotional cues markedly better than models trained only on traditional datasets. Alignment brought the models’ responses closer to human ones and also made their uncertainty track human uncertainty more closely, rather than simply making them more confident, which should make their predictions easier to trust in real-world applications.
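One generic way to train toward human uncertainty, rather than toward a single "correct" label, is to score a model against the full distribution of annotator responses. The sketch below illustrates that idea only; it is not the method used in the study, and the vote counts, logits, and function names are hypothetical values chosen for illustration.

```python
import math

def softmax(logits):
    """Convert raw model scores into a probability distribution."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def human_distribution(vote_counts):
    """Normalize annotator vote counts into a soft target distribution."""
    total = sum(vote_counts)
    return [c / total for c in vote_counts]

def soft_label_cross_entropy(logits, vote_counts):
    """Cross-entropy between the human response distribution and the model's.

    The loss is smallest when the model's probabilities match the spread
    of human responses, so it rewards matching human uncertainty.
    """
    probs = softmax(logits)
    target = human_distribution(vote_counts)
    return -sum(t * math.log(p) for t, p in zip(target, probs) if t > 0)

# Hypothetical item: 10 annotators split 6/3/1 across three candidate labels.
votes = [6, 3, 1]

# A model whose probabilities mirror the human split...
calibrated_logits = [math.log(0.6), math.log(0.3), math.log(0.1)]
# ...versus one that is nearly certain of the majority label.
overconfident_logits = [10.0, 0.0, 0.0]

loss_calibrated = soft_label_cross_entropy(calibrated_logits, votes)
loss_overconfident = soft_label_cross_entropy(overconfident_logits, votes)

print(f"calibrated:    {loss_calibrated:.3f}")
print(f"overconfident: {loss_overconfident:.3f}")
```

Under this loss, the overconfident model is penalized even though it picks the majority label, because it assigns almost no probability to the answers that a minority of annotators gave.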

The research points toward more intuitive, human-centric AI systems. Foundation models that reflect human judgment could help in fields such as healthcare, where AI might assist diagnosis by interpreting medical images with a more human-like grasp of context. The same approach could improve virtual reality, gaming, and automated customer service, where understanding and predicting human behavior is crucial. As AI evolves, aligning it with human judgment will matter for building trust in its applications and for making them effective. The study highlights the potential of foundation models and offers a template for future work on AI systems that behave more like the people who use them.

*Nature*, published online 12 November 2025; doi:10.1038/s41586-025-09631-6

Aligning foundation models with human judgments enables them to more accurately approximate human behaviour and uncertainty across various levels of visual abstraction, while additionally improving their generalization performance.